MOVING AT THE SPEED OF INSPIRATION

In the fall of 2022 we started a journey that turned out to be a true pioneering effort in the democratization of AI/ML Deep Learning NLP models, and the building of a truly innovative app that we humbly believe will change market research.

But what do you do when you move your service-based platform to a web app? More specifically, how do you take your in-house deep-learning AI models and put them in a cloud without paying exorbitant fees that you would have to pass on to your customers? It’s a tough set of questions that we had to answer.

Let me provide some of the challenging details:

- We currently have around 50 NLP models that do our predictions

- We additionally have several other ML and algorithmic analytic processing engines

- These things do some heavy crunching, and can take up a large amount of disk space. How do you move them from Linux metal that sits in your data center to a cloud platform that is tuned for sub-second, stateless applications?

- Our development team has over 50 person-years of experience across a wide range of computing, and we dove in, with vigor, to solve this equation.

Adding to the difficulty, our team is a fractional group of experts who have come together to do the research we do. This allows us to provide a special value, but with a fractional team and only 6-7 months, we were up against the clock. What lay before us was an effort that one would want at least a year and a team of 5-6 to accomplish. Fortunately, this team is used to monumental, you could even say existential, challenges.

Just a little under two years ago, IBM’s Watson organization announced they were sunsetting classification prediction products that we were squarely counting on to do our NLP scoring. At the moment of the announcement we had no “plan B” to pivot to. Because our team has led business units in global tech companies, we knew the analytical products we were using were at high risk: they were cutting edge, not mainstream tools that would generate significant cash for a large company like IBM. We had been researching and building AI in the labs for a couple of years, but nothing gave us accuracy, at the data volumes we were collecting, that a) compared to Watson, and b) was adequate to replace the current approach of our production system. This was a rigorous discovery process for us. We had many attempts, and mostly disappointments. Our biggest problem was that we didn’t have enough data (or resources) to train a quality deep learning model to the accuracy we needed. Then, what felt like out of nowhere, large language models showed up on the horizon. We were just a little ahead of the curve, and it caught up.

These new large language models like BERT, ELMo, GPT, and XLNet trained a language model on “word to word” relevance using millions upon millions of data points. They popularized an approach called “transfer learning”, and most of them are built on an architecture known as the “transformer”. Transfer learning is when you start from a model that has already been trained on a broad task and then retrain only a small part of it, often just one layer, to specifically match your domain. An example may be a large model trained on all animals, which you then adapt by training it specifically to focus on cats. This approach needs much less data to successfully train that final layer. There are still concerns the industry is considering with large language models, such as how inherent biases impact results.

What we needed was a way to trigger cloud functionality with a healthy dose of content, run our predictions, and have the routine return a result. This was something easy to do in the old client/server world where you owned all the equipment, but almost impossible in a cloud model without going to an expensive platform that could price our product out of the reach of our customers. We are also a big open source shop, and the tech platforms we back are important to us (GCP, Firebase, Nuxt, Vue, Node.js, Python).
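To make the transfer-learning idea described above concrete, here is a minimal sketch in plain Python. It is an illustration only, not our production code: the "pretrained" base is a tiny layer with made-up fixed weights standing in for a large language model, and the names `BASE_WEIGHTS`, `base_features`, `predict`, `fine_tune`, and `EXAMPLES` are all hypothetical. The point it shows is that the base stays frozen while only a small final layer is trained on a handful of domain examples.

```python
import math

# Hypothetical "pretrained" base: fixed weights standing in for the layers
# a large model has already learned. They are never updated below.
BASE_WEIGHTS = [[0.9, -0.4], [-0.3, 0.8], [0.5, 0.5]]

def base_features(x):
    """Frozen feature extractor: one tanh layer with fixed weights."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in BASE_WEIGHTS]

def predict(head, x):
    """Trainable head: logistic regression on top of the frozen features."""
    feats = base_features(x)
    z = head["bias"] + sum(w * f for w, f in zip(head["weights"], feats))
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune(examples, epochs=500, lr=0.5):
    """Train only the head, using plain gradient descent on log loss."""
    head = {"weights": [0.0] * len(BASE_WEIGHTS), "bias": 0.0}
    for _ in range(epochs):
        for x, label in examples:
            feats = base_features(x)
            error = predict(head, x) - label
            head["weights"] = [w - lr * error * f
                               for w, f in zip(head["weights"], feats)]
            head["bias"] -= lr * error
    return head

# Tiny made-up domain dataset: label 1 when the first input dominates.
EXAMPLES = [([1.0, 0.0], 1), ([0.9, 0.2], 1), ([0.0, 1.0], 0), ([0.1, 0.8], 0)]

head = fine_tune(EXAMPLES)
print(round(predict(head, [1.0, 0.0]), 2), round(predict(head, [0.0, 1.0]), 2))
```

Because only the four head parameters are learned, four examples are enough here. That is the same reason a small domain dataset can be sufficient when the heavy lifting has already been done by a pretrained model.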

We were getting a little frustrated, and tired of brick walls, when Scott on our team revisited Firebase Cloud Functions and found that Firebase had shipped a just-in-time release that would give us a path through. The Cloud Functions we were putting our models in would now provide a Python platform for development. HUGE! This allowed us to deconstruct our old batch process of calculations into a web-based approach while still leveraging our Python-based IP. We would move our engine, with a bit of remodeling, to a more real-time, individualized analytics platform. Porting rather than rewriting increased our speed of progress.

We had found the perfect spot for our technical stack, but we don’t believe what we were doing had been done before. There was nothing out there that said it would work, but theoretically it seemed it would. We would have to step forward with a sense of faith that we could use it to complement our stack. To GCP, Firebase, Nuxt, and Vue we added Firebase Cloud Functions and the AI open source product “Replicate”; these were the missing pieces of our puzzle. Yet we were going to have to carve them to fit ourselves.

We embarked, endured, fought, endured… endured some more, and eventually emerged from the primordial ooze that is web-app, cloud-based custom deep learning models, and found a righteous offering that changes everything. Pioneering is painful. Let me give you an example. Firebase Functions has a local emulation solution that, for us, simply didn’t work, period. So the only way to test was to deploy the function, which takes about 5 mins. In a normal development environment, you save code, run it, and get an error. This takes 30 seconds. You fix the error and move on. In a new piece of code you may have 10-30 little errors to correct. Now imagine you have to deploy to test each one: 5 mins * 10 = 50 mins vs. 0.5 mins * 10 = 5 mins. But fixing an error may take several tests. Let’s say it takes you 5 tries to get each one right; now 10 errors become 50 test cycles: 50 * 5 mins = 250 mins (>4 hrs) vs. 25 mins. At that tenfold slowdown, code sets that should take half a day became 4+ day sagas. Remember, we are trying to do things no one else has done; it’s full of attempts and rethinks. Still, we endured.
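For anyone who wants to plug in their own numbers, the back-of-the-envelope math above can be sketched in a few lines of Python. The function name `total_minutes` is made up for this illustration, and the inputs are just the rough figures from the example (10 errors, 5 tries each, a 5 minute deploy vs. a 30 second local run).

```python
def total_minutes(errors, tries_per_error, minutes_per_cycle):
    """Total time spent waiting on test cycles while fixing a batch of errors."""
    return errors * tries_per_error * minutes_per_cycle

# Rough figures from the example: 10 errors, 5 tries to fix each one.
deploy_to_test = total_minutes(errors=10, tries_per_error=5, minutes_per_cycle=5.0)
run_locally = total_minutes(errors=10, tries_per_error=5, minutes_per_cycle=0.5)

print(deploy_to_test)                # 250.0 minutes, a little over 4 hours
print(run_locally)                   # 25.0 minutes
print(deploy_to_test / run_locally)  # 10.0, the slowdown factor
```

The slowdown factor depends only on the ratio of the two cycle times, so it stays at roughly 10x no matter how many errors or retries a given piece of code needs.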

We also had to think about what we love and hate about today’s web apps. You know, that collection of things you incessantly yell at every day. If we are building an app to connect normal people, and how they feel about companies and brands, to those companies and brands, we need a tool that embodies the brilliance and simplicity we believe everyone is craving. Even more, how could we enable the sponsors of research, and the consumers/storytellers themselves, to take the controls and begin to push the research in new directions?

Well, a crazy thing happened. Everybody loves the experience. I can tell you my heart sat tense for a few months, hoping all the work would meet a user audience that would even consider using the toolset. Turns out they will, with vigor.

We obliterated Pandora’s box. We broke it all, and as we put it back together (the decomposed components of over 5 years of work), we started seeing new, better ideas and new approaches. For example, we saw a perfect model for agile, and we saw a way to speed up consumer research and reduce its cost at a shocking level. We also found ways for consumers to get more intimate and connected to the companies they use.

Breaking the Glass

We have begun to break the glass of traditional market research. The awesome problem is our customers didn’t budget for this, because they had no idea they could have it. We were doing something no one had done. So, how do you get user feedback for a validated learning process? For example, ask your consumer: “How do you want to feel when you breathe water?” Most of them would just look at you funny. No one currently sees a need to breathe water. Same is true in app development. If you know your customer and domain, you can take risks to truly innovate beyond what can be explained as needs. This is one of the key premises of our web app and product. Brands can be their consumers’ agent, if they know them. It is a very profound market position to claim.

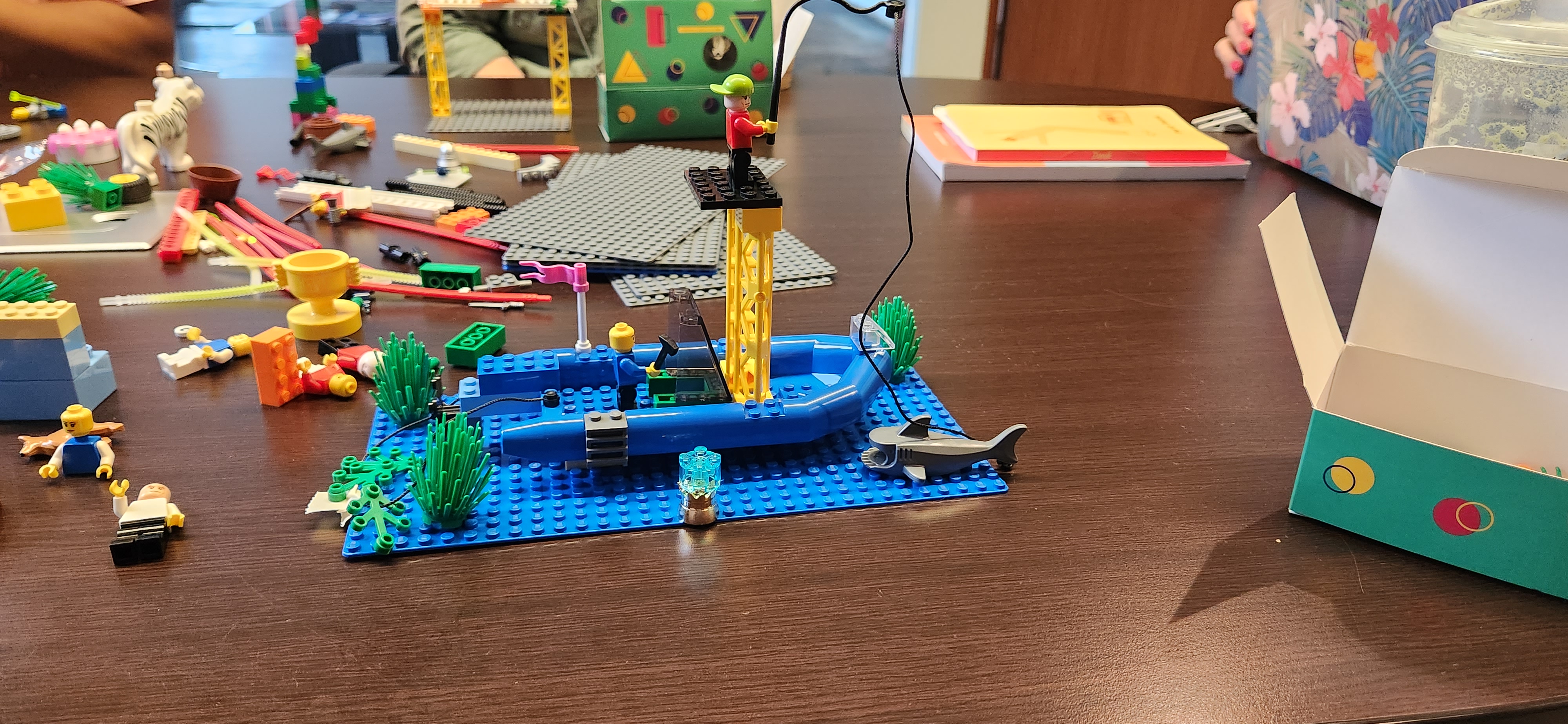

Caption: Scene from “The Abyss” when one of the salvage divers relearns breathing oxygen in liquid form.

Some companies fear innovation. The [research] elephant in the room is the comfortable fact that no matter how bad current research is, if you are level with your peers and competitors, you’ll be okay. But the world needs us to be better than this. There is “blue water” available to us. The good news: now you can reach it with less risk and more results with the SEEQ App.